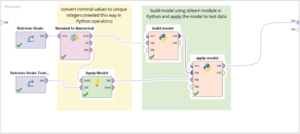


In order to set up ETL using a Python script, the following steps have to be implemented. The following modules are required to set up ETL using Python for the above-mentioned data sources, and the pip command can be used to install them. Hence, it is considered suitable only for simple ETL operations that do not require complex transformations or analysis. Pandas is one of the most popular Python libraries for data manipulation and analysis. With Pygrametl, the developer codes the ETL process in Python. Data mapping is the process of matching entities between the source and target tables. This is much more efficient than drawing the process in a graphical user interface (GUI) such as Pentaho Data Integration. Using the transform function, you can convert the data into any format you need. The primary motive for such projects is to move data from the source system to a target system so that the data in the target is highly usable, without any disruption or negative impact on the business. It can be defined as the process that allows businesses to create a Single Source of Truth for all Online Analytical Processing. One of the most significant advantages is that it is open source and scalable. This results in performance issues as the size of the dataset increases, so it is not considered suitable for Big Data applications. Sometimes different table names are used, and hence a direct comparison might not work. You can then focus on your key business needs and perform insightful analysis using BI tools. In simple terms, data validation is the act of confirming that the data moved as part of ETL or data migration jobs is consistent, accurate, and complete in the target production systems, so that it serves the business requirements. In most production environments, data validation is a key step in data pipelines.
Java has influenced other programming languages, including Python, and has spawned a number of branches, including Scala. ETL is the process of extracting a huge amount of data from a wide array of sources and formats, then converting and consolidating it into a single format before storing it in a database or writing it to a destination file. There are currently numerous ETL platforms available in the market. In this article, you have learned about setting up ETL using Python. A simple data validation test is to check that the CustomerRating is correctly calculated.


`if miss>0: print("{} has {} missing value(s)".format(col,miss))` `if(df.empty):` `try:` The Data Mapping table will give you clarity on which tables have these constraints. There are three groupings for this. In Metadata validation, we validate that the table and column data type definitions for the target are correctly designed and that, once designed, they are executed as per the data model design specifications. Beautiful Soup is a well-known web scraping and parsing tool for data extraction. The code in this file is responsible for iterating through credentials to connect with the database and perform the required ETL operations. There are two categories for this type of test. If yes, then how do we create classes to validate a row of data?


In this scenario, we use the pandas, numpy, and random libraries, so import them first. To check whether the data frame is empty, start from a function such as `def read_file():`. More information on Apache Airflow can be found here. Check whether both tools execute aggregate functions in the same way.
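The empty-frame check and the per-column missing-value message quoted earlier can be combined into one small helper. This is only a sketch: the function name `report_missing` and the sample rows are assumptions made for illustration, not part of the original recipe.

```python
import pandas as pd

def report_missing(df: pd.DataFrame) -> dict:
    """Return {column: missing_count} for columns with at least one missing value."""
    if df.empty:
        print("DataFrame is empty")
        return {}
    report = {}
    for col in df.columns:
        miss = int(df[col].isnull().sum())
        if miss > 0:
            print("{} has {} missing value(s)".format(col, miss))
            report[col] = miss
    return report

# Hypothetical sample rows standing in for the supermarket sales file.
sample = pd.DataFrame({
    "Invoice ID": ["750-67-8428", "226-31-3081", None],
    "Total": [548.97, None, None],
    "City": ["Yangon", "Naypyitaw", "Yangon"],
})
missing = report_missing(sample)
```

Returning the counts as a dictionary, rather than only printing them, makes the check easy to assert on in an automated test.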



Petl (Python ETL) is one of the simplest tools that allows its users to set up ETL using Python. Hevo Data, a No-code Data Pipeline, provides you with a consistent and reliable solution to manage data transfer between a variety of sources and a wide variety of desired destinations with a few clicks. The Password field was encoded and migrated. These are sanity tests that uncover missing records or row-count differences between the source and target tables, and they can be run frequently once automated. To automate the process of setting up ETL using Python, Hevo Data, an Automated No Code Data Pipeline, will help you load data from your desired source in a hassle-free manner. You will also gain a holistic understanding of Python, its key features, different methods to set up ETL using a Python script, the limitations of manually setting up ETL using Python, and the top 10 ETL using Python tools. Example: a new field, CSI (Customer Satisfaction Index), was added to the Customer table in the source, but the change failed to be made in the target system. `df = pd.read_csv('supermarket_sales.csv', nrows=2)` Odo is a Python tool that converts data from one format to another and provides high performance when loading large datasets into different datasets. Data uniformity tests are conducted to verify that the actual value of an entity matches exactly in different places.
`except ValueError:` Review the requirements document to understand the transformation requirements. Another test is to verify that TotalDollarSpend is correctly calculated, with no defects in rounding or maximum-value overflows. Termination date should be null if Employee Active status is True/Deceased. In this article, we will only look at the data aspect of tests for ETL and migration projects.


Validate the correctness of joining or splitting field values after an ETL or migration job is done. Read along to find out in-depth information about setting up ETL using Python. Luigi is considered suitable for creating enterprise-level ETL pipelines. However, Petl is not capable of performing any sort of data analytics and experiences performance issues with large datasets. Where feasible, filter all unique values in a column. It frequently saves programmers hours or even days of work. In a real-life situation, the operations to be performed would be much more complex and dynamic, and would require complicated transformations such as mathematical calculations, denormalization, etc. Pandas makes use of data frames to hold the required data in memory. `validation['chk'] = validation['Invoice ID'].apply(lambda x: True if x in df else False)` Hevo also allows integrating data from non-native sources using Hevo's in-built REST API & Webhooks Connector. The Orders table might have a CustomerID that is not in the Customers table. In this ETL using Python example, you first need to import the required modules and functions. For example, companies might migrate their huge data warehouse from legacy systems to newer and more robust solutions on AWS or Azure. We need to have tests to verify the correctness (technical and logical) of these. `data = pd.read_csv('C:\\Users\\nfinity\\Downloads\\Data sets\\supermarket_sales.csv')` Verify that data correction works.
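The orphan-record case mentioned above, an Orders row whose CustomerID does not exist in Customers, can be checked with a value-membership test. The sketch below uses hypothetical tables and `Series.isin`. Note that `x in df` on a DataFrame tests column labels rather than cell values, so it is not used here.

```python
import pandas as pd

# Hypothetical parent and child tables.
customers = pd.DataFrame({"CustomerID": [1, 2, 3]})
orders = pd.DataFrame({
    "OrderID": [10, 11, 12, 13],
    "CustomerID": [1, 2, 4, 4],
})

# Rows in the child table whose key has no match in the parent table.
orphans = orders[~orders["CustomerID"].isin(customers["CustomerID"])]
orphan_ids = sorted(orphans["CustomerID"].unique().tolist())
print(orphans)
```

Any row that survives the filter is a referential integrity defect to investigate.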

It allows users to write simple scripts that perform all the required ETL operations in Python.


It integrates with your preferred parser to provide idiomatic methods of navigating, searching, and modifying the parse tree. It is present in the source system as well as the target system. We pull a list of all tables (and columns) and do a text compare. Pygrametl is a Python framework for creating Extract-Transform-Load (ETL) processes. If there are default values associated with a field in the database, verify that the field is populated correctly when data is not there. The log indicates that you have started and ended the Load phase. One of the most significant advantages of using PySpark is the ability to process large volumes of data with ease. Data validation tests ensure that the data present in the final target systems is valid, accurate, in line with business requirements, and good for use in the live production system. `df = df[sorted(data)]`
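The "pull a list of all tables and columns and compare" step can be sketched with the standard-library `sqlite3` module. SQLite stands in here for whatever source and target databases are actually in use, and the helper names `table_columns` and `schema_diff` are assumptions for illustration. The missing CSI column mirrors the example given earlier.

```python
import sqlite3

def table_columns(conn):
    """Return {table: [(column, declared_type), ...]} for every user table."""
    schema = {}
    tables = conn.execute(
        "SELECT name FROM sqlite_master WHERE type = 'table' ORDER BY name"
    ).fetchall()
    for (table,) in tables:
        info = conn.execute('PRAGMA table_info("{}")'.format(table)).fetchall()
        # Each PRAGMA row is (cid, name, type, notnull, dflt_value, pk).
        schema[table] = [(row[1], row[2].upper()) for row in info]
    return schema

def schema_diff(source, target):
    """List tables or columns that are missing or typed differently in target."""
    problems = []
    for table, cols in source.items():
        if table not in target:
            problems.append("missing table: {}".format(table))
            continue
        target_cols = dict(target[table])
        for name, col_type in cols:
            if name not in target_cols:
                problems.append("missing column: {}.{}".format(table, name))
            elif target_cols[name] != col_type:
                problems.append("type mismatch: {}.{}".format(table, name))
    return problems

src = sqlite3.connect(":memory:")
tgt = sqlite3.connect(":memory:")
src.execute("CREATE TABLE Customer (CustomerID INTEGER, Name TEXT, CSI REAL)")
tgt.execute("CREATE TABLE Customer (CustomerID INTEGER, Name TEXT)")
diff = schema_diff(table_columns(src), table_columns(tgt))
print(diff)
```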

The number of data quality aspects that can be tested is huge, and the list below gives an introduction to this topic. The example in the previous section performs extremely basic Extract and Load operations. PySpark houses robust features that allow users to set up ETL using Python, along with support for various other functionalities such as data streaming (Spark Streaming), machine learning (MLlib), SQL (Spark SQL), and graph processing (GraphX). We have two types of tests possible here. Note: it is best to highlight (color code) matching data entities in the Data Mapping sheet for quick reference. `print ('CSV file is not empty')` 30, 31 days for other months.

(i) Non-numerical type: Under this classification, we verify the accuracy of the non-numerical content. Considering the volume of data most businesses collect today, this becomes a complicated task. Pass the file name as the argument, as in `filename ='C:\\Users\\nfinity\\Downloads\\Data sets\\supermarket_sales.csv'`. The data mapping sheet is a critical artifact that testers must maintain to achieve success with these tests. It also accepts data from sources other than Python, such as CSV/JSON/HDF5 files, SQL databases, data from remote machines, and the Hadoop File System. This means Apache Airflow can be used to create a data pipeline by consolidating various modules of your ETL using Python process. A predictive analytics report for the Customer Satisfaction Index was supposed to work with the last week's data, which was a sales promotion week at Walmart. More information on Pandas can be found here.
`df[col] = pd.to_datetime(df[col])` This section will help you understand how you can set up a simple data pipeline that extracts data from MySQL, Microsoft SQL Server, and Firebird databases and loads it into a Microsoft SQL Server database. This transformation adheres to the atomic UNIX principles. It can also be used to make system calls to almost all well-known operating systems.

Quite often the tools on the source system are different from those on the target system. Data mapping sheets contain a lot of information picked from data models provided by data architects. Python is one of the most popular general-purpose programming languages. It was created by Guido van Rossum and released in 1991.


Hevo as a Python ETL example helps you save your ever-critical time and resources and lets you enjoy seamless data integration. It is open-source and distributed under the terms of a two-clause BSD license. In this type of test, we focus on the validity of null data and verify that important columns cannot be null. This means that data has to be extracted from all the platforms they use and stored in a centralized database.
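A null-validity test of the kind described above can be sketched in pandas by listing the columns that must never be null and filtering the rows that violate the rule. The Employee-style table and the `required` list are hypothetical.

```python
import pandas as pd

# Hypothetical target-table extract; the columns are illustrative only.
target = pd.DataFrame({
    "EmployeeID": [1, 2, None, 4],
    "Name": ["Ann", None, "Raj", "Li"],
    "TerminationDate": [None, None, None, "2021-04-05"],
})

required = ["EmployeeID", "Name"]  # columns that must never be null

# Keep every row where at least one required column is null.
null_rows = target[target[required].isnull().any(axis=1)]
bad_index = null_rows.index.tolist()
print(null_rows)
```

Nullable columns such as TerminationDate are deliberately left out of `required`, since a null there can be valid.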

A logging entry needs to be established before loading. `validation = validation[validation['chk'] == True].reset_index()` It is considered to be one of the most sophisticated tools, housing various powerful features for creating complex ETL data pipelines. Another possibility is the absence of data. Below is a concise list of tests covered under this: (ii) Edge cases: Verify that the transformation logic holds good at the boundaries.

Verify whether invalid, rejected, or errored-out data is reported to users. We might have to map this information in the Data Mapping sheet and validate it for failures. The log indicates that you have started and ended the Transform phase. It is also known as write once, run anywhere (WORA).
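The log entries that mark the start and end of each phase can be illustrated with a very small pipeline. This is a sketch under stated assumptions, not the article's actual script: in-memory SQLite databases stand in for the real source and target, and the table names `sales` and `sales_clean` and the rounding transformation are invented for the example.

```python
import logging
import sqlite3

logging.basicConfig(level=logging.INFO, format="%(asctime)s %(message)s")
log = logging.getLogger("etl")

def extract(conn):
    log.info("Extract phase started")
    rows = conn.execute("SELECT id, amount FROM sales").fetchall()
    log.info("Extract phase ended")
    return rows

def transform(rows):
    log.info("Transform phase started")
    # Illustrative transformation: round amounts to two decimals.
    out = [(row_id, round(amount, 2)) for row_id, amount in rows]
    log.info("Transform phase ended")
    return out

def load(conn, rows):
    log.info("Load phase started")
    conn.executemany("INSERT INTO sales_clean (id, amount) VALUES (?, ?)", rows)
    conn.commit()
    log.info("Load phase ended")

source = sqlite3.connect(":memory:")
source.execute("CREATE TABLE sales (id INTEGER, amount REAL)")
source.executemany("INSERT INTO sales VALUES (?, ?)", [(1, 10.4567), (2, 3.1)])
target_db = sqlite3.connect(":memory:")
target_db.execute("CREATE TABLE sales_clean (id INTEGER, amount REAL)")

log.info("ETL process started")
load(target_db, transform(extract(source)))
loaded = target_db.execute(
    "SELECT id, amount FROM sales_clean ORDER BY id"
).fetchall()
```

Keeping each phase in its own function means a failure shows up in the log between a "started" line and its missing "ended" line.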

This means that all their data is stored across the databases of various platforms that they use. Businesses can instead use automated platforms like Hevo.


Compare these rows between the target and source systems to find mismatches. Data validation verifies whether the exact same value resides in the target system. Have tests to verify referential integrity checks. There are two possibilities: an entity might be present or absent as per the data model design. More information on Petl can be found here. April 5th, 2021. We can also easily check the data types in code. In this scenario, we process only the columns that match between the validation data and the input data, and arrange them by column name.

For date fields, include the entire range of expected dates, including leap years and 28/29 days for February. It includes memory structures such as NumPy arrays, data frames, lists, and so on. `df = pd.read_csv(filename)`
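Edge-case dates for such tests can be generated rather than typed by hand. The sketch below uses only the standard library. The helper names `month_end_dates` and `is_valid_date` are assumptions for illustration.

```python
import calendar
from datetime import date

def month_end_dates(year):
    """Return the last calendar day of every month in the given year."""
    return [date(year, m, calendar.monthrange(year, m)[1]) for m in range(1, 13)]

def is_valid_date(year, month, day):
    """True when the year/month/day triple is a real calendar date."""
    try:
        date(year, month, day)
        return True
    except ValueError:
        return False

leap_feb = month_end_dates(2020)[1]    # 2020 is a leap year
plain_feb = month_end_dates(2021)[1]   # 2021 is not
print(leap_feb, plain_feb)
```

Feeding these boundary dates through the transformation, together with an invalid one such as 29 February of a non-leap year, exercises the cases most likely to break date logic.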

In these types of tests, we identify fields with truncation and rounding logic concerning the business. Document any business requirements for fields and run tests for the same. These modules can be installed by running the following commands in the Command Prompt. The following files should be created in your project directory in order to set up ETL using Python. This file is required for setting up all source and target database connection strings. Data validation is a form of data cleansing. But the ETL job was designed to run at a frequency of 15 days. There are various aspects that testers can test in such projects, such as functional tests, performance tests, security tests, infra tests, E2E tests, regression tests, etc. Initially, testers could create a simplified version and add more information as they proceed. Data architects may migrate schema entities or make modifications when they design the target system. In many cases, the transformation is done to change the source data into a more usable format for the business requirements. This data is extracted from numerous sources. The log indicates that you have started the ETL process. `print(dtype)` `import pandas as pd` Pick important columns and filter out a list of rows where the column contains null values.
It can be used for a wide variety of applications such as server-side web development, system scripting, data science and analytics, software development, etc. Apache Airflow implements the concept of a Directed Acyclic Graph (DAG). We request readers to share other areas of testing that they have come across during their work, to benefit the tester community. In this scenario, we check each column's data type and convert the date column, looping with `for col in df.columns:`. `print ('CSV file is empty')`
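The data-type check and date conversion described above, together with the `except ValueError:` fragment quoted earlier, might fit together roughly as follows. The function name `convert_date_columns` and the sample frame are hypothetical. The sketch assumes that a column that cannot be parsed should be left unchanged and reported.

```python
import pandas as pd

def convert_date_columns(df, date_cols):
    """Print each column's dtype, then parse the named columns as datetimes."""
    out = df.copy()
    for col in out.columns:
        print(col, out[col].dtype)
    failed = []
    for col in date_cols:
        try:
            out[col] = pd.to_datetime(out[col])
        except ValueError:
            # Leave the column unchanged and record it for follow-up.
            failed.append(col)
    return out, failed

raw = pd.DataFrame({
    "Invoice ID": ["750-67-8428", "226-31-3081"],
    "Date": ["2019-01-05", "2019-03-08"],
    "Comment": ["ok", "not a date"],
})
converted, failed = convert_date_columns(raw, ["Date", "Comment"])
```

Working on a copy keeps the input frame intact, so the original values remain available for comparison when a conversion fails.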


Ensure they work fine post-migration. It can be seen as an orchestration tool that can help users create, schedule, and monitor workflows. We have a defect if the counts do not match. This file contains the queries used to extract data from the source databases and load it into the target database when setting up ETL using Python. The business requirement says that the combination of ProductID and ProductName in the Products table should be unique, since ProductName alone can be duplicated. Python to Microsoft SQL Server Connector. Document all aggregates in the source system and verify that aggregate usage gives the same values in the target system [sum, max, min, count]. For foreign keys, we need to check whether there are orphan records in the child table, where the foreign key used is not present in the parent table.
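The aggregate comparison (count, sum, min, max) can be sketched by running the same query against both systems and comparing the results position by position. In-memory SQLite databases stand in for the real source and target. The Customer table layout is an assumption, and it reuses the TotalDollarSpend field named earlier.

```python
import sqlite3

AGGREGATE_SQL = (
    "SELECT COUNT(*), SUM(TotalDollarSpend), "
    "MIN(TotalDollarSpend), MAX(TotalDollarSpend) FROM Customer"
)

def aggregates(conn):
    """Return (count, sum, min, max) for the TotalDollarSpend column."""
    return conn.execute(AGGREGATE_SQL).fetchone()

def make_db(rows):
    """Build a throwaway database holding the given Customer rows."""
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE Customer (CustomerID INTEGER, TotalDollarSpend INTEGER)"
    )
    conn.executemany("INSERT INTO Customer VALUES (?, ?)", rows)
    return conn

source = make_db([(1, 120), (2, 80), (3, 300)])
target = make_db([(1, 120), (2, 80), (3, 30)])   # one value corrupted in flight

src_agg, tgt_agg = aggregates(source), aggregates(target)
labels = ["count", "sum", "min", "max"]
mismatches = [name for name, s, t in zip(labels, src_agg, tgt_agg) if s != t]
print(mismatches)
```

Here the row counts agree while the other aggregates differ, which is why counts alone are not a sufficient check.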

These tests form the core tests of the project. Businesses collect a large volume of data that can be used to perform an in-depth analysis of their customers and products, allowing them to plan future growth, product, and marketing strategies accordingly.

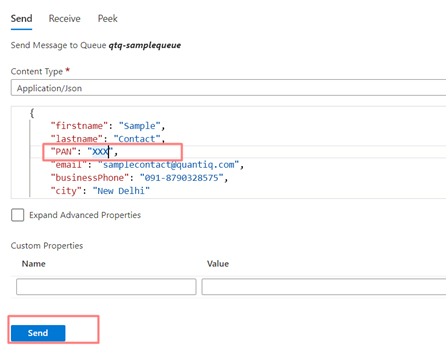

Another test could be to confirm that the date formats match between the source and target systems. (i) Record count: Here, we compare the total count of records for matching tables between the source and target systems. These platforms could be Customer Relationship Management (CRM) systems such as HubSpot and Salesforce, or digital marketing platforms such as Facebook Ads and Instagram Ads. In this article, we will discuss many of these data validation checks. Java is a popular programming language, particularly for developing client-server web applications. I need to know whether it is really possible to write a pytest script to run over a set of, say, 1000 records. Why validate data for data migration projects?
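The record-count comparison can be sketched as follows, again with in-memory SQLite standing in for the real systems. The helper names `row_counts` and `count_defects` and the two sample tables are hypothetical.

```python
import sqlite3

def row_counts(conn, tables):
    """Return {table: row_count} for the listed tables."""
    return {
        t: conn.execute('SELECT COUNT(*) FROM "{}"'.format(t)).fetchone()[0]
        for t in tables
    }

def count_defects(source_counts, target_counts):
    """Return {table: (source_count, target_count)} where the counts differ."""
    return {
        t: (n, target_counts.get(t))
        for t, n in source_counts.items()
        if target_counts.get(t) != n
    }

def make_db(customers, orders):
    """Build a throwaway database with the given number of rows per table."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE Customers (CustomerID INTEGER)")
    conn.execute("CREATE TABLE Orders (OrderID INTEGER)")
    conn.executemany("INSERT INTO Customers VALUES (?)", [(i,) for i in range(customers)])
    conn.executemany("INSERT INTO Orders VALUES (?)", [(i,) for i in range(orders)])
    return conn

tables = ["Customers", "Orders"]
source = make_db(customers=3, orders=2)
target = make_db(customers=3, orders=1)   # one order lost during the load
defects = count_defects(row_counts(source, tables), row_counts(target, tables))
print(defects)
```

A function like `count_defects` returning an empty dictionary is a natural assertion for a pytest-style test of the migration.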

Hevo Data, with its strong integration with 100+ data sources (including 40+ free sources), allows you not only to export data from your desired data sources and load it to the destination of your choice, but also to transform and enrich your data to make it analysis-ready. Can we use pytest to automate ETL data validation? Hence, if your ETL requirements include creating a pipeline that can process Big Data easily and quickly, then PySpark is one of the best options available.

